Artificial intelligence and machine learning are now widespread, with practical applications in many Earth science domains, including climate modeling, weather prediction, and volcanic eruption forecasting. This revolution in computing has been driven largely by rapid improvements in software and algorithms.

But now, “we’re approaching a second computing revolution of redesigning our hardware to meet new computing challenges,” said Jean Anne Incorvia, a professor of electrical and computer engineering at the University of Texas at Austin.

Traditional silicon-based computer hardware (like the chips found in your laptop or cell phone) has bottlenecks in both speed and energy efficiency, which may limit its use in increasingly intensive computational problems.

To break these limitations, some engineers are drawing inspiration from biological neural systems using an approach called neuromorphic computing. “We know that the brain is really energy efficient at doing things like recognizing images,” said Incorvia. This is because the brain, unlike traditional computers, processes information in parallel, with neurons (the brain’s computational units) interacting with one another.

Incorvia and her research team are now studying how magnetic, rather than silicon, computer components can mimic certain useful aspects of biological neural systems. In a new study published in April in the journal Nanotechnology, her team reports that physically tuning the interactions between magnetic nanowires could significantly cut the energy costs of training computer algorithms used in a variety of applications.

“The vision is [creating] computers that can react to their environment and process a lot of data at once in a smart and adaptive way,” said Incorvia, who was the senior author on the study. Reducing the costs of training these systems could help make that vision a reality.

Lateral Inhibition at a Lower Cost

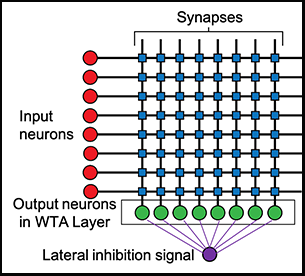

Artificial neural networks are one of the main computing tools used in machine learning. As the name implies, these tools mimic biological neural networks. Lateral inhibition is an important feature of biological neural networks that helps improve signal contrast in human sensory systems, like vision and touch, by having more active neurons inhibit the activity of surrounding neurons.
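To make the idea concrete, here is a minimal software sketch of lateral inhibition on a row of units. It is an illustration only and is not the method used in the study: the function name `lateral_inhibition`, the `strength` parameter, and the rule of subtracting a fraction of the average activity of the two nearest neighbors are all assumptions chosen for simplicity.

```python
def lateral_inhibition(activity, strength=0.5):
    """Return activity after each unit is suppressed by its nearest neighbors.

    Hypothetical toy rule: a unit's output is its input minus `strength`
    times the mean activity of its immediate neighbors, clipped at zero.
    """
    n = len(activity)
    output = []
    for i, value in enumerate(activity):
        # Collect the left and right neighbors that exist (edges have only one).
        neighbors = [activity[j] for j in (i - 1, i + 1) if 0 <= j < n]
        inhibition = strength * sum(neighbors) / len(neighbors) if neighbors else 0.0
        output.append(max(0.0, value - inhibition))
    return output


# One strongly active unit surrounded by weakly active ones.
signal = [0.2, 0.2, 1.0, 0.2, 0.2]
sharpened = lateral_inhibition(signal)
print(sharpened)
```

In this toy example, the strongly active unit in the middle loses only a little activity, while its immediate neighbors are pushed to zero, so the gap between the peak and its surroundings grows. That increase in contrast is the effect the article describes.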

Implementing lateral inhibition in artificial neural networks could improve certain computer algorithms. “It’s preventing errors in processing the data so you need to do less training to tell your computer when it did things wrong,” said Incorvia. “So that’s where a lot of the energy benefits come from.”

Lateral inhibition can be implemented with conventional silicon computer parts—but at a cost.

“There is peripheral circuitry that is needed to implement this functionality,” said Can Cui, an electrical and computer engineering graduate student at the University of Texas at Austin and lead author of the study. “The end result is that as the size of the network scales up, there’s much more difficult[y] in the design of the circuit and you will have extra power consumption.”

Researchers in the new study are using magnetic nanowires and their innate physical properties to more efficiently produce lateral inhibition. These devices produce small, stray magnetic fields, which are typically a nuisance in designing computer parts because they can disturb nearby circuits. But here, “this kind of interaction might be another degree of freedom that we can exploit,” Cui said.

By running computer simulations, the researchers found that when these nanowires are placed parallel and next to one another, the magnetic fields they produce can inhibit one another when activated. That is, these magnetic parts can produce lateral inhibition without additional circuitry.

The researchers ran further computer modeling experiments to understand the physics of their devices. They found that using smaller nanowires (30 nanometers wide) and tuning the spacing between pairs of nanowires were key to enhancing the strength of lateral inhibition. By optimizing these physical parameters, “we can achieve really large lateral inhibition,” said Cui. “The highest we got is around 90%.”

The Challenge of Real-World Applications

Training machine learning algorithms is a large time and energy sink, but building computer hardware with these magnetic parts could mitigate those costs. The researchers have already built prototypes of these devices.

However, there are still open questions about the practical applications of this technology to real-world computing challenges. “While the study is really good and combines the hardware-software functionality very well, it remains to be seen whether it is scalable to more large-scale problems,” said Priyadarshini Panda, an electrical engineering professor at Yale University who was not involved in the current study.

But the study is still a promising next step and shows “a very good way of matching what the algorithm requires from the physics of the device, which is something that we need to do as hardware engineers,” she added.

—Richard J. Sima (@richardsima), Science Writer

Citation:

Sima, R. J. (2020), The future of big data may lie in tiny magnets, Eos, 101, https://doi.org/10.1029/2020EO144681. Published on 02 June 2020.

Text © 2020. The authors. CC BY-NC-ND 3.0

Except where otherwise noted, images are subject to copyright. Any reuse without express permission from the copyright owner is prohibited.