Earth still shook during 2020’s pandemic lockdowns.

Vitor Silva recalled a request to his organization from Italy’s Civil Protection Department in March.

“One of the directors said, ‘Look, there’s this global pandemic. It’s going to affect all the countries. There are a lot of uncertainties when it comes to earthquakes, but we know for sure that within the span of a year, we’re going to have earthquakes and, yes, some of them are going to be destructive.…So should we try to get ahead and identify the places where there’s a higher likelihood of earthquakes happening and there is also a good likelihood [of having] a huge impact in terms of COVID?’”

The connection between COVID-19 and earthquake vulnerability arises in the aftermath of an earthquake disaster, when displaced people are often relocated to shelters where close proximity to other victims puts them at increased risk of disease.

This query was not completely out of the blue for Silva, who is the risk coordinator at the Global Earthquake Model Foundation (GEM), a scientific organization based in Pavia, Italy, whose mission is to calculate and communicate earthquake risk. In August, Silva published a study modeling the potential impact seismic events may have on coronavirus infection rates. He also created a map of high-risk areas by combining models of seismic activity and the potential economic costs of building damage with data from Johns Hopkins University on the number of COVID-19 cases in each country. Since the map was created in April, “we had destructive earthquakes in Mexico, in Greece, and in Croatia,” Silva said. When Silva went back to check the map, he found that all those places had been highlighted as having the highest risk for earthquakes and COVID-19.

“It’s almost like I want to say, ‘I told you so,’” Silva said. “I think this map can help national authorities by looking at different locations where they should basically change their plans.”

The pandemic has changed how governments deal with natural disaster preparedness. In a nonpandemic year, countries may have plans for relief following earthquakes, floods, and landslides, but “these plans involve putting a lot of people in closed spaces,” Silva said, including “temporary shelters, which a lot of times are tents or hotels or gymnasiums. That’s probably no longer acceptable now in the time of COVID.”

Silva’s research highlights the importance of understanding the intersection of geoscience modeling with human systems such as settlement patterns, economics, and migration; knowing where and who the people affected are is essential for effectively modeling natural hazards.

GEM is a model for how scientists are modeling the processes of Earth with human equity in mind.

Collaboration and Openness in Modeling Natural Hazards

Since its founding in 2009, GEM has been carrying out a broad spectrum of activities that support its “mandate of making the world more resilient to earthquakes,” said Marco Pagani, the hazard team coordinator at GEM.

To carry out its mission, GEM’s modeling work is divided among three core teams. Pagani’s Hazard Team assesses the geophysical phenomena of seismic hazard: Where, how much, and how frequently is the ground going to shake? The Risk Team then figures out what is going to be exposed and vulnerable to that earthquake hazard: Where are the buildings and people, and how vulnerable are they to harm? The Social Vulnerability Team assesses the resiliency of communities to earthquakes on the basis of socioeconomic indicators such as the Human Development Index, the number of hospital beds per capita, and crime levels.

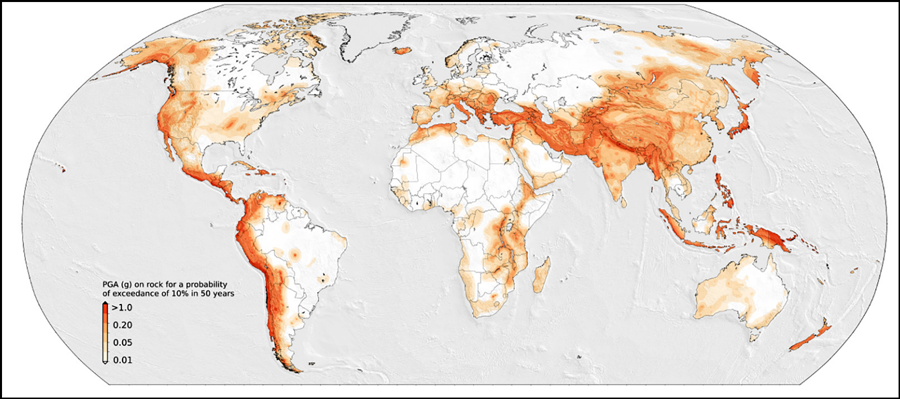

In 2019 GEM published its first comprehensive map of seismic hazard around the globe, which is the result of an extensive collaborative effort to combine the probabilistic seismic hazard models of many different nations and regions of the world. The organization has continued to update the components of the map since its release.

“It’s a dynamic compilation that is able to receive the new models that are made available,” said Pagani.

This flexibility is due to OpenQuake, an open-source hazard and risk calculation tool developed by GEM. The OpenQuake engine is the computer code backbone of GEM. OpenQuake code undergoes extensive testing, Pagani said, which makes the tool ready to accept contributions from a large modeling community around the world.

“OpenQuake is almost a bit of a Frankenstein because we had to consider functionalities of a lot of tools that exist out there,” Silva said. The engine is being refined and improved on the basis of community feedback and specific project needs. “We have several projects with several governments, bilateral collaborations, international partnerships, and we had to improve this tool in order to meet the requirements.”

In a way, the researchers said, OpenQuake embodies GEM’s ethos of collaboration, openness, and transparency.

“First of all, it’s free: You can go right now to download it and use it. Second, it’s completely transparent, so you can actually open it and see the methodology, see line by line to really understand what it’s doing. And third, you can provide your own routines, your own algorithms, you can write your own pieces of code and provide this to the community,” Silva said.

Modeling Risk on the Ground

Silva’s Risk Team develops exposure models to figure out what human systems are going to be exposed to the earthquake hazard. That is, what are “the buildings, the bridges, the people, basically everything that is exposed to the seismic hazards?” said Silva.

However, the amount and the quality of data available about buildings vary from country to country, which in turn affects what kinds of information can be input into the risk model.

“If I start with countries like the United States, like Canada, like New Zealand, like Australia, like Switzerland, these countries actually have extremely complete catalogs of the built environment,” Silva said. “So there’s information about where the buildings are, the main construction materials, how many stories, construction age. All this information, it’s available in public databases.”

Other nations, many in underdeveloped regions of central Asia and Africa, are less likely to have exact information about the buildings within their borders. But they may have socioeconomic data—population locations, economic composition—that can be used to estimate how many buildings can be expected in different locations. “There is more uncertainty, but it is still possible for us to come up with something,” Silva said.
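
As a rough illustration of this kind of proxy estimate, the sketch below converts a population count into an approximate building count and then splits it by construction type. It is not GEM’s method: the function names `estimate_buildings` and `split_by_construction`, the occupancy parameters, and every number are hypothetical, chosen only to show the arithmetic.

```python
def estimate_buildings(population, people_per_dwelling, dwellings_per_building):
    """Approximate number of residential buildings implied by a population count.

    All three inputs are hypothetical illustration parameters, not GEM values.
    """
    if people_per_dwelling <= 0 or dwellings_per_building <= 0:
        raise ValueError("occupancy parameters must be positive")
    dwellings = population / people_per_dwelling
    return dwellings / dwellings_per_building


def split_by_construction(total_buildings, shares):
    """Distribute a building count across construction types by assumed shares."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return {kind: total_buildings * share for kind, share in shares.items()}


if __name__ == "__main__":
    # Invented example: a district of 100,000 people.
    total = estimate_buildings(100_000, people_per_dwelling=4.0,
                               dwellings_per_building=2.0)
    print(split_by_construction(total, {"masonry": 0.6, "wood": 0.4}))
```

In practice each assumed ratio carries its own uncertainty, which is one reason estimates built this way are less certain than those drawn from a complete building catalog.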

Finally, there are countries for which almost no building information is available. “In this case, we’ve been using a lot of new technologies, like remote sensing satellite data or OpenStreetMap data,” Silva said. Using satellite data, it is possible to understand where urbanized areas are, for example, and estimate the average sizes or heights of buildings in those areas. “It’s obviously much more time-consuming but something that is necessary to do for some countries.”

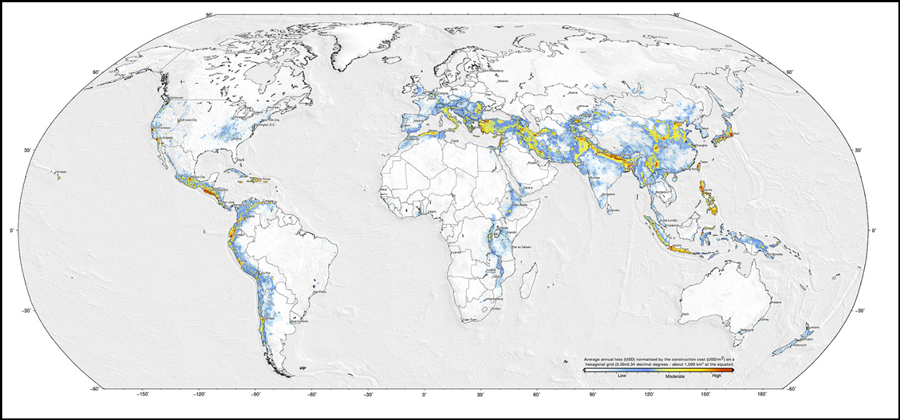

All told, the global exposure model includes 1.3 billion residential, 90.9 million commercial, and 35.5 million industrial buildings. The estimated price tag of all that real estate? About $203.6 trillion.

“We need to make sure that all of this information is captured on the exposure model,” said Silva. “When we start running the risk analysis, what we’re doing is simulating many, many, many earthquakes, basically millions of possible earthquakes that might happen in the future.”

For each simulated earthquake, GEM estimates the shaking on the ground, the damage to the buildings, and the expected losses. After considering all the different seismic events and their probabilities (an M9 earthquake is fortunately much rarer than smaller seismic events), the risk analyses generate the probabilities of exceeding different levels of loss. The model also takes into account the vulnerability, or fragility, of the affected buildings.
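
The steps described above can be sketched as a small Monte Carlo simulation. This is a toy under stated assumptions, not the OpenQuake engine: the event list, the damage ratios, the exposure value, and the function names `simulate_annual_losses` and `exceedance_probability` are all invented for illustration, and the shaking and damage steps are collapsed into a single random damage ratio per event.

```python
import random


def simulate_annual_losses(n_years, events, exposure_value, seed=0):
    """Simulate total loss for each of n_years.

    events: list of (annual_rate, mean_damage_ratio) pairs, one per
    hypothetical earthquake class. Rarer classes have smaller rates.
    """
    rng = random.Random(seed)
    losses = []
    for _ in range(n_years):
        year_loss = 0.0
        for annual_rate, mean_damage_ratio in events:
            # Simplification: at most one occurrence per class per year,
            # which is reasonable only when annual_rate is small.
            if rng.random() < annual_rate:
                # Assumed damage model: noisy ratio clipped to [0, 1].
                ratio = rng.gauss(mean_damage_ratio, 0.25 * mean_damage_ratio)
                ratio = min(1.0, max(0.0, ratio))
                year_loss += ratio * exposure_value
        losses.append(year_loss)
    return losses


def exceedance_probability(losses, threshold):
    """Fraction of simulated years whose loss is greater than threshold."""
    return sum(1 for loss in losses if loss > threshold) / len(losses)


# Invented event classes: frequent and mild through rare and severe.
EVENTS = [(0.1, 0.01), (0.01, 0.1), (0.001, 0.5)]

if __name__ == "__main__":
    simulated = simulate_annual_losses(100_000, EVENTS, exposure_value=1e9)
    for level in (1e6, 5e7, 3e8):
        print(level, exceedance_probability(simulated, level))
```

Evaluating `exceedance_probability` over a range of thresholds traces out a loss exceedance curve, the kind of output the paragraph above describes.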

“For a lot of the countries, this is the first time that an open-sourced risk model was available for the country, which is a fundamental tool for people to start investing in disaster risk management measures,” said Silva. For example, GEM provided modeling and data for northern Africa, western Africa, northeastern Europe, northeastern Asia, Mexico, and the Korean Peninsula. Recently, GEM was also added to the Nasdaq Risk Modelling service to better inform financial and insurance markets.

Limits of Census Data

Before GEM came along, there were other global seismic risk models, such as the Global Seismic Hazard Assessment Program, which was developed in 1992. These older models tended to have vulnerability and exposure estimates that were not tailored to specific countries. “Our knowledge, technology, and tools evolved a lot since the ’90s,” Silva said.

Despite the importance of building and demographics data for risk modeling, it is still difficult to get some crucial information directly through most national censuses, meaning that this information often needs to be gathered elsewhere.

Having information on how buildings were constructed is crucial for modeling their vulnerability to natural hazards, said Nicky Hastings, the risk assessment project lead at Natural Resources Canada. “Wood has a lot of flexibility, for example. Some of the more rigid structures of the unreinforced masonry obviously don’t flex as well when you have ground shaking.… And that’s very different from, say, a flooding event. A wooden structure does not do well in flooding events, but a concrete structure will.” Hastings and her team are developing seismic risk as well as coastal flooding models for Canada.

Unfortunately, the Canadian census, which is taken every 5 years, does not include information on the construction of buildings. “So it’s information that we have been developing over the years and using some different methodologies to collect and then to develop algorithms to understand,” Hastings said.

Before the advent of Google Maps, Hastings’s collaborators at the University of British Columbia collected information about different types of construction by simply walking by and observing buildings from the outside. Now they are able to use photographs on Google Street View, which “was a really big advance to help us get the construction types and get a sense of what that looks like,” Hastings said.

Hastings and her colleagues are in the process of developing an open-source flood risk modeling tool. “I think there’s still a long way to go. It’s not the same level as the seismic model is,” she said.

Hastings’s team has created consistent and standardized maps that could be applied to natural hazards modeling. In the United States, a new database allows property owners to get an easy-to-understand indicator of the potential for flooding now and over the next several decades.

“My dream is to do the same kind of thing with the various geohazards,” Hastings said.

The date buildings were constructed also matters, because building codes have changed over time, said Helen Crowley, a seismic risk consultant at the nonprofit Eucentre. Crowley and Eucentre recently helped develop and publish the exposure model for European seismic risk in close collaboration with GEM and as part of the European Commission’s Horizon 2020 Seismology and Earthquake Engineering Research Infrastructure Alliance for Europe project.

Understanding when seismic design codes were introduced and enforced helps the model better reflect the vulnerability of different buildings. For example, after the 1908 Messina earthquake, Italian design codes recommended that buildings be able to withstand more lateral force. However, those codes were initially required only in areas where earthquakes had occurred, not across the whole of Italy. As more earthquakes occurred over the following century, more municipalities required seismic design codes. Records of the adoption and implementation of seismic design codes therefore vary within a single country as well as across international borders.

“The problem is that every country has slightly different data in their census,” Crowley said. “So one country will tell us the number of dwellings, they might tell us about the number of buildings, there might be information on the height of those buildings, the age of those buildings, and in some cases, the external material.”

Hastings and Crowley both also want to investigate the movement of people when assessing seismic vulnerability because the distribution of the population changes throughout the day as people commute from home to work and back.

“We want to use that data in the future and look at how people move during the week, during seasons,” Crowley said. “Obviously, where people are in the summer, where they are in the winter is very different. So these are all things that we want to add to the model in the future, but for now we don’t have that in our model.” These types of data also are not captured in a census.

“The [U.S.] Census itself has hardly anything,” said Bruce Spencer, a statistician at Northwestern University who has participated in evaluations of population estimates by the Census Bureau. “I think the first step is for the natural hazards community to think about what information they would like to get their hands on for which geographic areas, and then to try to see where we can find that data.”

The American Community Survey, an ongoing demographic survey program from the U.S. Census Bureau, may include more detailed information than the decadal census, with a higher temporal resolution of updated results each year. Researchers outside the government, however, typically won’t have access to that level of detail to protect the confidentiality of the data, Spencer said. It is possible to collaborate with the Census Bureau and have them run a more in-depth analysis through the Research Data Center, which allows qualified researchers access to more detailed data than the bureau would normally release.

“It is a way to, for statistical purposes, use very fine-grained data,” Spencer said. “So if you wanted to look at disparities in risk profiles, going down to a very fine-grained geographic level, you could do that. You wouldn’t be able to report it for an individual city block, but you could report it for large aggregates of city blocks to say these populations are at higher risk.”

In addition, the U.S. Department of Housing and Urban Development sponsors the American Housing Survey, which is conducted every other year and provides up-to-date information about the physical condition of homes and neighborhoods, the cost of maintaining homes, and who lives in those homes.

“I think that this is a very ripe area for people working in natural hazards and people more familiar with social science and social surveys to collaborate on,” Spencer said.

Social Vulnerability

In addition to modeling how natural hazards pose risk to buildings, scientists are beginning to assess the differing social vulnerabilities populations have in recovering from natural hazards.

GEM has a small team dedicated to assessing social vulnerability following a seismic event. In calculating the direct costs of seismic events, “we’re talking about dollars, and we’re talking about people,” Silva said.

By collecting many socioeconomic indicators at the global scale, such as the Human Development Index (used by the United Nations Development Programme and including factors such as per capita income, life expectancy, and education levels), crime levels, and the number of hospital beds per capita, GEM was able to create a composite indicator for social vulnerability that could help prioritize resources to assist areas with both high direct risk of seismic events and high social vulnerability.
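
One common way to build a composite indicator of this kind is to rescale each input to a 0 to 1 range, flip the inputs for which a higher value means lower vulnerability, and average the results. The sketch below follows that recipe; it is an assumption, since the article does not describe GEM’s actual weighting or normalization, and the region names, values, and function names are hypothetical.

```python
def min_max(values):
    """Rescale a list of numbers to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # no spread, so no signal
    return [(v - lo) / (hi - lo) for v in values]


def composite_vulnerability(regions, indicators):
    """Equal-weight composite score per region (0 = least, 1 = most vulnerable).

    regions: {region_name: {indicator_name: value}}
    indicators: {indicator_name: True if a higher value means MORE vulnerable}
    """
    names = list(regions)
    totals = {name: 0.0 for name in names}
    for indicator, higher_is_worse in indicators.items():
        scaled = min_max([regions[name][indicator] for name in names])
        for name, score in zip(names, scaled):
            totals[name] += score if higher_is_worse else 1.0 - score
    return {name: totals[name] / len(indicators) for name in names}


# Invented values for three hypothetical regions.
REGIONS = {
    "A": {"hdi": 0.9, "beds_per_1000": 5.0, "crime_index": 10.0},
    "B": {"hdi": 0.5, "beds_per_1000": 1.0, "crime_index": 50.0},
    "C": {"hdi": 0.7, "beds_per_1000": 3.0, "crime_index": 30.0},
}
INDICATORS = {"hdi": False, "beds_per_1000": False, "crime_index": True}

if __name__ == "__main__":
    print(composite_vulnerability(REGIONS, INDICATORS))
```

Equal weighting is the simplest choice, not necessarily the right one; a real index would need to justify its weights and test how sensitive the ranking is to them.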

Mojtaba Sadegh, a civil engineering professor at Boise State University whose research has focused on flooding, drought, and wildfires, uses income as a proxy for vulnerability because wealth can buffer against some of the effects of these hazards. For example, the smoke caused by wildfires kills more people than the fires themselves, but those with the economic means can afford HEPA filters and advanced air conditioning to mitigate their effect, Sadegh said. These human factors need to be incorporated into hazard modeling.

Although social vulnerability indexes can help map where the most and least vulnerable areas are, practitioners find it hard to use this information, said Jackie Yip, a coastal risk scientist at Natural Resources Canada. “If you don’t know why a neighborhood is vulnerable, then how do you reduce their vulnerability?”

Vulnerability is made up of many dimensions, and the factors driving it are dependent on both the context and the type of hazard, Yip said.

Armed with Canadian census data, Yip is using machine learning algorithms to find patterns in what drives social vulnerability across different neighborhoods based on indicators related to housing conditions, financial agency, social integration, and individual autonomy.

This neighborhood archetype model shows different neighborhood profiles of why a place may be more likely to be vulnerable; one neighborhood may have a higher concentration of elderly and low-income residents, whereas another may have more residents who don’t speak English or French as their first language, which suggests different challenges for recovering from a natural disaster.

“We are not trying to label these neighborhoods as these things,” Yip said. “We are just giving a high-level view of what is driving social vulnerability” to help practitioners prioritize where to focus.

Integrating social sciences is “something that is often lacking in the hazard field because it is usually done by engineers, but they don’t have the training toward the social side,” Yip said.

So far, this work is still very top-down and has been implemented only in British Columbia, but Yip plans to expand the approach to all of Canada after establishing whether the model reflects reality on the ground by working with people living in these neighborhoods.

“We can’t have just a data-driven [model] about people without talking to people,” Yip said.

Sadegh agreed. “The purpose of any model is to improve human livelihood and to save human lives. At the end of the day, this is the ultimate goal.”

Hazards and Models Crossing Borders

To achieve better equity in natural hazard modeling, the process needs to be collaborative and global, experts say. Natural hazards don’t stop at borders even if how they are modeled differs on either side.

When GEM works on building a model for a certain region, for example, it makes sure to first get in touch with the community leaders, Pagani said. “We try to engage them into those projects because we recognize the importance of working with local experts.”

Hastings and the Geological Survey of Canada are working with the Semiahmoo, a First Nation community on the Pacific coast, as part of an effort to understand the priorities and values different communities have surrounding coastal flooding. They are developing a guideline to set best practices, “because a model is a model, but you don’t know if it actually works until you test it in the community,” Hastings said.

The Semiahmoo collaboration “brings together some of the Indigenous Knowledge with the scientific knowledge, and it came about from that more holistic perspective,” she said.

The Semiahmoo were a community before there was a Canada-U.S. border. When the border was put in place, the community was severed, Hastings said. This separation was evident when, at a conference, a counselor stood up and mentioned the large differences in coastal flooding models produced by the U.S. side and those produced by the Canadian side. Now Hastings and her team are working with their U.S. counterparts at NOAA and the University of Washington to clarify what these differences are.

“The model inputs are different, the modeling is different, and of course, there are political differences,” Hastings said.

“And you don’t realize it because you tend to work within your country until you start working together,” she added. “The really cool thing about this specific study is we’re really starting to learn, cross border, some of those big differences…and you may not be able to resolve them all right away, but at least you can bring some clarity in terms of what the differences are.”

Silva agreed. “The development of these models involved literally hundreds of people around the world. We did dozens of meetings and workshops,” he said. “We went to all these different places, we held meetings with people, we showed the results, we ran the calculations with them. We identified a lot of mistakes, a lot of errors in the model by working with the local people. I also think that something that maybe differentiates [the GEM model] a little bit is the fact that it was a community effort.”

“We’re trying to model the world, right?” said Hastings. “We’re trying to model the future of the world.”

Author Information

Richard J. Sima (@richardsima), Science Writer

Citation:

Sima, R. J. (2021), Where do people fit into a global hazard model?, Eos, 102, https://doi.org/10.1029/2021EO154550. Published on 23 February 2021.

Text © 2021. The authors. CC BY-NC-ND 3.0