Editors’ Vox is a blog from AGU’s Publications Department.

Flood inundation models are tools that predict where water flows, how deep it gets, how fast it moves and how long it remains during a flood event. But despite recent advances in flood inundation models, some flood modeling paradigms are being used beyond their range of applicability rather than leveraging the strengths of different methods.

A new article in Reviews of Geophysics explores the strengths and limitations of different flood modeling methods and calls for an integrated approach to flood modeling. Here, we asked the authors to give an overview of flood inundation models, the challenges of “siloing,” and future directions for research.

In simple terms, how do flood inundation models work and why are they important?

Flood inundation models take inputs, such as rainfall, ground elevation, river flow, and infrastructure data, and simulate how flooding develops across a given area. In some ways, they function as a replica of the physical world, allowing modelers to approximate how a flood scenario may evolve.

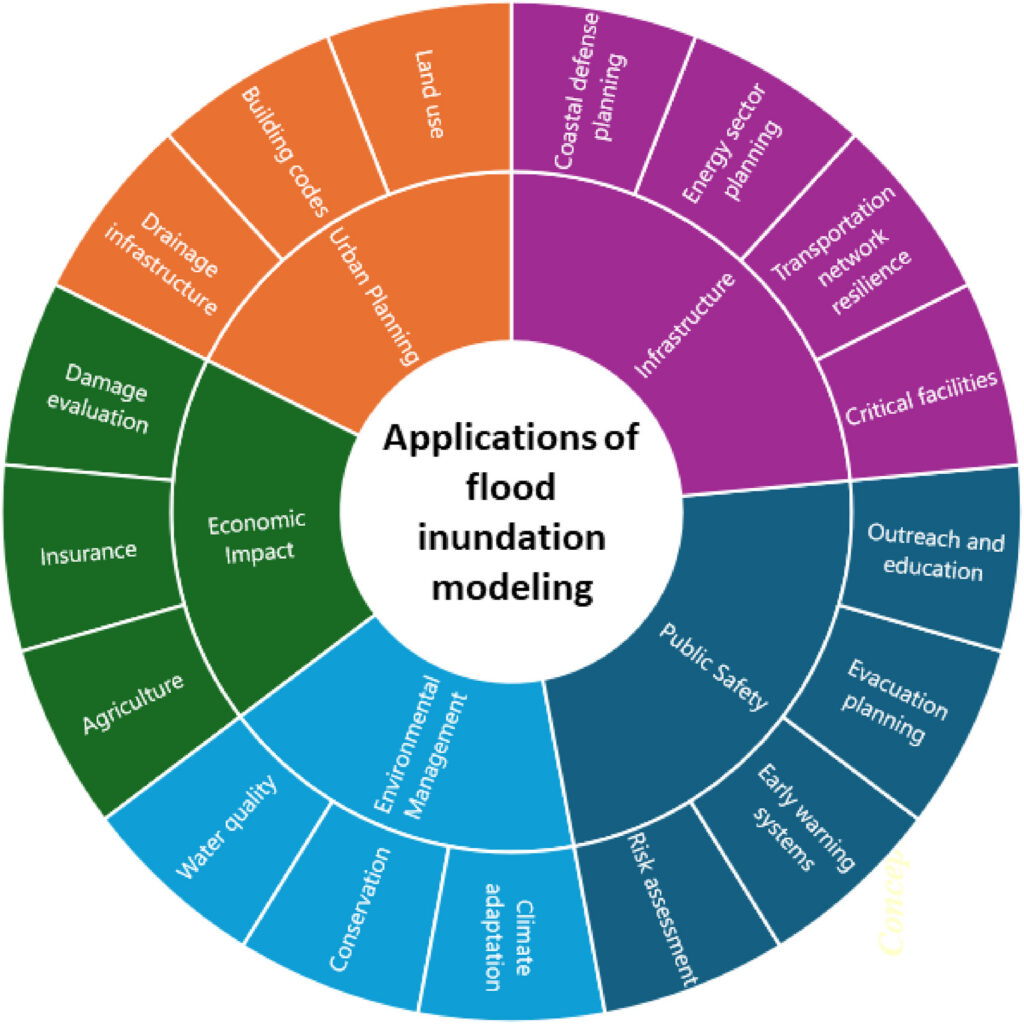

The models matter because they support decisions across a wide range of sectors. Emergency managers use them to plan evacuations and allocate resources. Engineers rely on them to design flood control infrastructure such as levees, bridges, and drainage systems. Regulatory agencies, like FEMA in the United States, use the models to delineate flood zones, which determine where properties are subject to flood risk. Flood inundation models also inform decisions related to public health, agriculture, insurance markets, transportation infrastructure, and environmental management, among many others.

How have flood inundation models evolved since they first started being developed?

Flood inundation models have evolved significantly over the past century, driven primarily by advances in mathematics, computational power, and data availability. Early models were relatively simple and could only track water moving in one direction along a channel. As the field advanced, models expanded to simulate how floodwater propagates across the broader landscape, including areas far beyond waterbodies.

The availability of high-resolution terrain data, remote sensing, and satellite imagery further transformed the field. Modelers could work with detailed representations of the landscape at regional, continental, and even global scales, which were computationally out of reach just decades earlier. High-performance computing made it possible to run complex simulations faster and over much larger areas.

More recently, the rise of data-driven approaches, such as artificial intelligence and machine learning, has introduced an additional modeling paradigm, one that learns patterns from observed data rather than solving physical equations. These methods often offer computational efficiency in data-rich environments. However, this rapid diversification has also introduced a challenge. Rather than these different approaches growing together and complementing each other, they increasingly develop in isolation, each evolving within its own methodological boundaries. This divergence, and what it means for the future of the field, is a defining concern in flood modeling today.

What are the flood inundation modeling methods described in your review article?

Our review groups flood inundation modeling into four broad methods. First are computational models, which are physics-based models that numerically solve equations representing conservation of mass and momentum and are often very robust for representing flood dynamics. Second are artificial intelligence and machine learning algorithms, which have proliferated with the rise of big data. These methods can be fast and efficient, but they often rely heavily on data, lack physical constraints, and offer limited generalizability beyond their training conditions. This is particularly concerning because those “unseen” conditions could be the very extreme events that matter far more than the data-rich, frequent, and milder scenarios. Third are observational and experimental methods, which use field measurements, satellite data, and laboratory studies to describe or analyze flooding; these can help with calibration and validation but usually have limited predictive skill on their own. Fourth are conceptual models, which simplify flood behavior into transparent and efficient rules. These can be useful for planning and broad analyses, but they overlook important hydraulic details.
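
To make “conservation of mass and momentum” concrete, one widely used one-dimensional form is the Saint-Venant equations for flow along a channel. This is offered only as an illustration of the kind of equations physics-based models solve; it is not necessarily the formulation discussed in the review, and it assumes a prismatic channel with no lateral inflow. Here $A$ is the flow cross-sectional area, $Q$ the discharge, $h$ the water depth, $g$ gravitational acceleration, $S_0$ the bed slope, and $S_f$ the friction slope.

```latex
% Conservation of mass (continuity)
\frac{\partial A}{\partial t} + \frac{\partial Q}{\partial x} = 0

% Conservation of momentum
\frac{\partial Q}{\partial t}
  + \frac{\partial}{\partial x}\!\left(\frac{Q^{2}}{A}\right)
  + g A \frac{\partial h}{\partial x}
  = g A \left(S_0 - S_f\right)
```

Two-dimensional models solve an analogous set of equations over the landscape, which is part of why they demand more data and computing power than simpler rules.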

What is “siloing” in flood inundation modeling and why does it occur?

In our review, “siloing” refers to the tendency of different modeling approaches to evolve independently within their own methodological boundaries, with limited exchange or integration across paradigms. One concern is substantial investment in methods with a limited scope, on the assumption that those methods can ultimately overcome their own simplifications and replace other methods. This has been observed particularly in the push to use data-driven and remote sensing paradigms to replace physics-based models, rather than integrating their strengths. Siloing can arise for several reasons. Different applications demand different levels of accuracy, efficiency, predictive skill, and computing power. Some methods are easier to use or better matched with available data. In other cases, modelers may be more familiar with one method than with alternatives, so they continue refining that method even when another approach could solve part of the problem better. Siloing also grows when simplified methods are adopted for convenience, or justified by data limitations and computing power constraints, and are then gradually treated as full replacements for more physically grounded models.

What are some of the challenges that siloing presents?

Siloing creates both scientific and practical problems. One major challenge is that models may be pushed beyond the scope they were designed for. For example, some simplified or data-driven methods can miss key flood dynamics, such as backwater effects, transient flow behavior (how floods change rapidly over time), or infrastructure controls, yet still be used in consequential decisions. Another problem is that siloing slows progress by underusing the strengths of complementary methods. Siloing also makes it difficult to objectively evaluate model assumptions because each modeling community tends to focus on improving its own methods rather than testing where those methods perform best and where they fall short.

What are the pathways for future research in flood inundation modeling?

The main pathway we propose is synergistic integration of modeling methods: moving away from developing them in isolation and toward integrating them so that each method contributes what it does best. This means, for example, using simple or data-driven models to identify where detailed hydrodynamic modeling is most needed, leveraging satellite and field observations to improve other models’ inputs and calibration, and incorporating machine learning in ways that are guided by physical constraints rather than data alone. It also means investing more in physics-based models, experiments, and data collection, such as detailed surveys of ground elevation and physical infrastructure, rather than defaulting to simplification as a substitute for that investment. Advances in high-performance computing make this level of integration increasingly feasible.
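
As a rough illustration of the first example above, using a simple model to identify where detailed hydrodynamic modeling is most needed, the sketch below applies a conceptual “bathtub” rule to a toy elevation grid and flags cells near the wet/dry boundary. Everything in it is an assumption made for illustration: the function names, the grid values, the water level, and the margin are hypothetical and do not come from the review.

```python
# Hypothetical screening sketch. Names, values, and thresholds are
# illustrative assumptions, not a method taken from the review.

def bathtub_depth(elevation, water_level):
    """Conceptual 'bathtub' rule: depth is water level minus ground
    elevation, floored at zero. It ignores flow paths and momentum."""
    return [[max(water_level - z, 0.0) for z in row] for row in elevation]


def flag_for_detailed_modeling(elevation, water_level, margin):
    """Flag cells whose ground elevation lies within `margin` of the
    water level. These cells sit near the wet/dry boundary, where the
    simple rule is least trustworthy, so they are candidates for a
    detailed hydrodynamic model."""
    return [[abs(water_level - z) <= margin for z in row] for row in elevation]


# Toy 3x4 elevation grid in metres (made-up values, not real terrain).
elevation = [
    [1.0, 1.5, 2.2, 3.0],
    [0.8, 1.9, 2.1, 2.8],
    [0.5, 1.2, 2.6, 3.5],
]

depths = bathtub_depth(elevation, water_level=2.0)
flags = flag_for_detailed_modeling(elevation, water_level=2.0, margin=0.25)

for depth_row, flag_row in zip(depths, flags):
    print([round(d, 2) for d in depth_row], flag_row)
```

The simple rule is cheap enough to run everywhere, while the flagged cells mark where its answer is most sensitive and a physics-based model would add the most value. A real workflow would need a more defensible screening criterion than a fixed elevation margin.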

The goal is not to sacrifice physics just to arrive at faster or more convenient approaches, but to develop actionable models that are physically grounded, reliable across a range of conditions, and informative for the decisions that depend on them. Advances across all these fronts can help close the gap between physical realism and computational efficiency, making integrated modeling not just an aspiration but an achievable practice.

—Behzad Nazari ([email protected], ORCID 0009-0000-5568-4735), The University of Texas at Arlington, United States; Ebrahim Ahmadisharaf ([email protected], ORCID 0000-0002-9452-7975), Florida State University, Tallahassee, United States

Editor’s Note: It is the policy of AGU Publications to invite the authors of articles published in Reviews of Geophysics to write a summary for Eos Editors’ Vox.