In 2021, the United Kingdom experienced an extended period of windlessness; in 2022 it was struck by a record heat wave. At the same time, there was unprecedented flooding in Germany and there were massive forest fires in France. Are these events part of the violent opening salvo of the anthropogenic warming we’ve been warned about? When and where will they happen next? Climate scientists tend to demur. We just don’t know, at least not yet.

For nearly 6 decades, climate models have confirmed what back-of-the-envelope physics already has told us: An increased concentration of greenhouse gases in the atmosphere is warming the planet. Dozens of models, produced by research institutions across the globe, have given visible shape to what lies ahead: time-lapse maps of the world turning from yellow to orange to blood red, ice caps disappearing beneath the ominous contours of temperature gradient lines.

Now, at what the Intergovernmental Panel on Climate Change (IPCC) has said is a critical moment for avoiding the worst outcomes, governments are saying, We’re convinced—now what do we do?

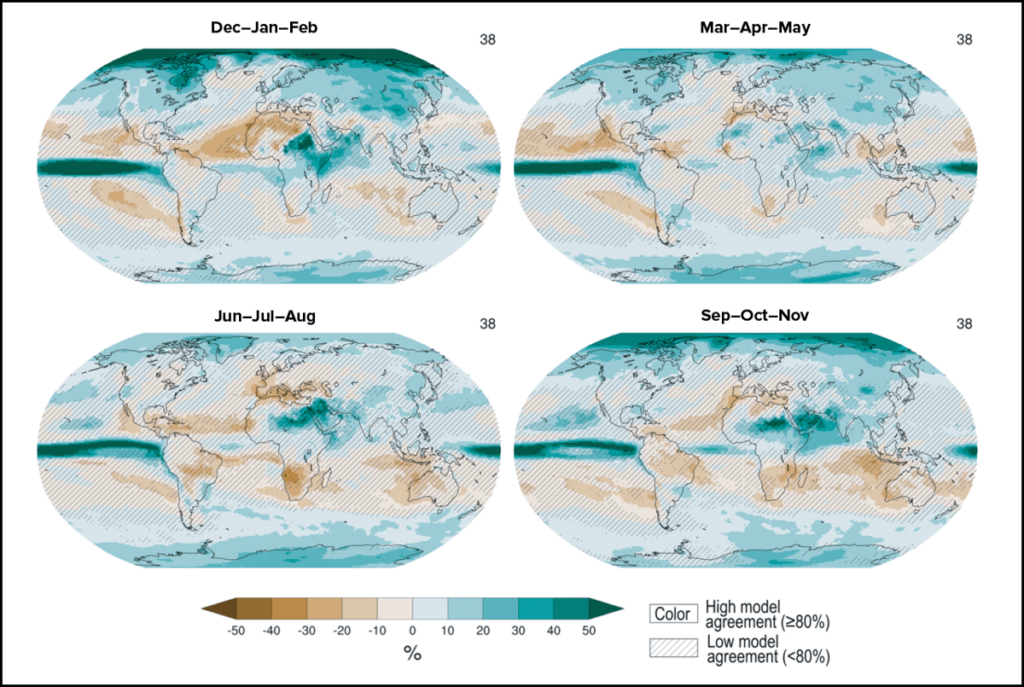

Answering that question is where our current models fall short. They show us how the planet is warming but not how that will affect the weather in a given city, or even country. Because the climate models rely, in a sense, on averages, they can’t predict the outliers—those extreme events with the most potential for destruction, and the ones we most need to prepare for. But current models can’t even determine whether some places will experience more droughts or floods, whether governments should build reservoirs or levees.

“A highly nonlinear system where you have biases which are bigger than the signals you’re trying to predict is really a recipe for unreliability,” said Tim Palmer, a Royal Society research professor in climate physics and a senior fellow at the Oxford Martin School.

It’s not that we don’t have the data—we just don’t have the power to process them fast enough to forecast changes before they become old news. And unlike short-term weather forecasting, longer-term climate forecasting involves incorporating many more physical processes, like the carbon cycle, cloud feedback, and biogeochemistry. “They all consume computer time,” said Palmer.

That’s about to change. This year saw a milestone in supercomputing when the world’s first exascale computer, capable of a quintillion (10¹⁸) calculations per second, came online in the United States. For the first time, scientists like Palmer have said, they’re about to be able to model climate on something close to the scale on which its driving processes actually happen.

Others have questioned whether accurate local climate predictions, especially decades into the future, are even possible, no matter how much expensive computing power is thrown at them. And behind the technical challenge is a logistical one, and ultimately a political one. The legion of government and university climate labs that produce climate models don’t have the resources to marshal humanity’s computational apex, and climate data themselves are siloed in a global hodgepodge of grant-funded research programs.

Palmer and a growing number of others have said climate scientists need to band together and make fewer models but ones with astronomically better resolution. The effort, embodied by a project the European Union (EU) announced this year called Destination Earth, would amount to a new moon shot. It won’t be easy, but it could pay off.

Another Earth

The Destination Earth initiative, nicknamed DestinE, was launched in March 2022 as part of the European Green Deal, the EU’s plan to become climate neutral by 2050. Proponents have said its potential extends to every corner of climate mitigation, from flood prevention to protecting water supplies to maintaining food production. The idea is to consolidate high-resolution models into a digital twin of Earth.

The concept of a digital twin, developed by the manufacturing industry to test and improve products more efficiently, describes a virtual replica of a physical object. Tesla, for example, builds digital twins of each of its cars based on a constant flow of sensor data, then uses analysis of the twin to continually update the real car’s software and optimize performance. Earth is a vastly more complex object, with too many interrelated systems ever to be fully accounted for in a digital twin. But DestinE will attempt to use a virtual Earth in much the same way, to forecast both how the climate will change and how our attempts to weather that change will hold up, in effect creating a virtual test lab for climate policy.

DestinE will ultimately involve several sets of twins, each focusing on a different aspect of Earth. The first two, which officials ambitiously expect to be ready in 2024, will focus on extreme natural events and climate change adaptation. Others, including those focusing on oceans and biodiversity, are planned for subsequent years. The twin concept will make it easier for nonexperts to make use of the data, according to Johannes Bahrke, a European Commission spokesperson. “The innovation of DestinE lies…in the way it enables interaction and knowledge generation tailored to the level of expertise of the users and their specific interests,” Bahrke wrote in an email.

The initiative is a collaboration between the European Centre for Medium-Range Weather Forecasts (ECMWF), which will handle building the digital twins themselves, and two other agencies: The European Space Agency (ESA) will build a platform that will allow stakeholders and researchers around the world to easily access the data the initiative produces, and the European Organisation for the Exploitation of Meteorological Satellites (EUMETSAT) will establish a “data lake” consolidating all of Europe’s climate data for use in the models.

The goal is to blend climate models and other types of data, including on human migration, agriculture, and supply chains, into one “full” digital replica of the planet by 2030. According to Bahrke, the initiative also plans to use artificial intelligence to “provide means to fully exploit the vast amounts of data collected and simulated over decades and understand the complex interactions of processes between Earth system and human space.”

It’s an ambitious goal, but at its core is what some have said will be a step change in how we see Earth systems. The key to its potential? Resolution.

Downscaling Models, Upscaling Physics

Climate models divide the globe into cells, like pixels on a computer screen. Each cell is loaded with equations that describe, say, the influx of energy from the Sun’s radiation, or how wind ferries energy and moisture from one place to another. In most current models, those cells can be 100 square kilometers or larger. That means the average thunderstorm, for example, in all its complexity and dynamism, is often represented by one homogenous square of data. Not only are storms the often-unexpected source of a flood or tornado, but also they play a crucial role in moving energy around the atmosphere, and therefore in the trajectory of the climate—they help determine what happens next.

But it’s no mystery how mountains force air upward, driving the vertical transfer of heat, or how eddies transport heat into the Southern Ocean, or how stresses in ice sheets affect how they tear apart. “We have equations for them, we have laws. But we’ve been sort of forbidden to use that understanding by the limits of computation,” said Bjorn Stevens, managing director of the Max Planck Institute for Meteorology in Hamburg, Germany, and a close colleague of Palmer’s. “People are so used to doing things the old way, sometimes I think they forget how far away some of the basic processes in our existing models are from our physical understanding.”

In any climate model, smaller-scale features are parameterized, which means they’re represented by statistical analysis rather than modeled by tracking the outcome of physical processes. In other words, the gaps in the models are filled through correlation rather than causation.

“We’re often studying the impact of our approximations rather than the consequences of our physical understanding,” said Stevens. “And that, [to me] as a scientist, is very frustrating.”

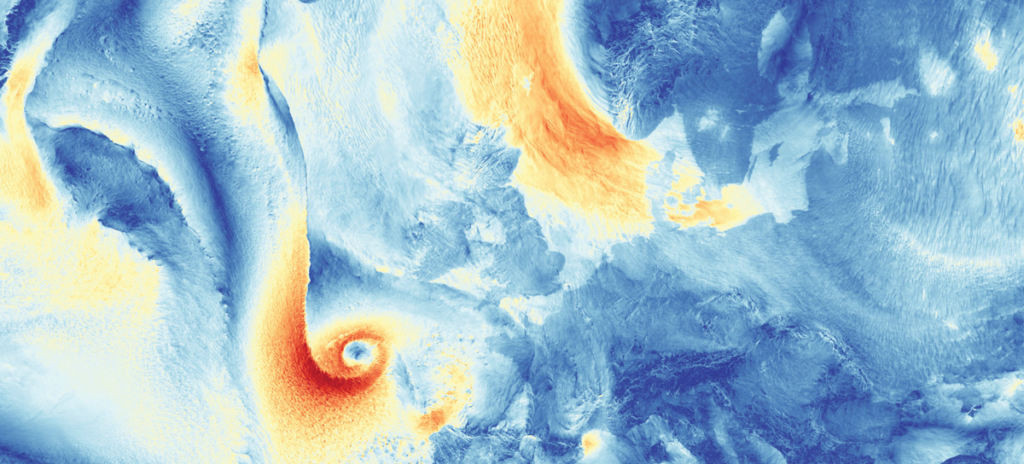

Exascale computers will be able to run models with a much higher resolution—with cells as small as 1 square kilometer—allowing them to directly model more physical processes that happen on finer scales. These include many of the vertical heat exchanges that drive so much atmospheric activity, making the new models what Stevens has called “fully, physically three dimensional.” The added processing power also will allow massively complex systems to be better coupled with each other. The movement of ocean currents, for example, can be plugged into atmospheric air currents and the variegated radiative and deflective properties of land features with more precision.

“We can implement that and capture so much more of how [such interaction] steers the climate,” said Stevens. “If you finally can get the pattern of atmospheric deep convection over the warm tropical seas to behave physically, will that allow you to understand more deeply how that then shapes large-scale waves in the atmosphere, guides the winds, and influences things like extratropical storms?”

Stevens said the prospect has reinvigorated the climate modeling field, which he thinks has lost some of its vim in recent years. “This ability to upscale the physics is what’s really exciting,” he said. “It allows you to take physics that we understand on a small scale and look at its large-scale effect.”

Laying the Groundwork

In late June 2022, more than 80 climate scientists and programmers gathered in a rented coworking space in Vienna, huddled in bunches around their laptops for a climate hackathon. The NextGEMS (Next Generation Earth Modelling Systems) project, funded by the EU’s Horizon 2020 program, had just completed a 4-month run of its latest model. At 5-kilometer resolution, the run yielded two simulated years of the global climate, and the hackers were hungrily digging into the output, looking for bugs.

“We’re happy when we find bugs. It’s easier to find them if more people look at [the model] from very different perspectives,” said Theresa Mieslinger, a cloud researcher and organizer of NextGEMS’ hackathons (the inaugural one took place in October 2021). Each NextGEMS event is a chance for climate modelers to work together preparing two models, the German-developed Icosahedral Nonhydrostatic Weather and Climate Model (ICON) and ECMWF’s Integrated Forecasting System (IFS), often referred to as the “European model” for weather prediction, for use in high-resolution modeling efforts like Destination Earth.

Finding bugs in the models is a natural result of running them at higher resolution, not unlike taking the training wheels off your bike only to find you’re not as good at balancing as you’d thought. Things are further complicated by the new ability to couple systems. A recent example: NextGEMS had connected the atmosphere to the ocean in a way that extracted too much energy from the ocean. “Suddenly models which behaved tamely at coarse resolution developed winds blowing in the wrong direction,” said Stevens, who serves as a guiding presence at the hackathons. He was far from disappointed by the error. “It can feel like riding a bronco rather than a merry-go-round pony, which is scientifically exhilarating.”

Part of the hackathons’ goal is to train modelers in handling the sheer volume of data involved in high-resolution modeling. Even at a resolution of 5 kilometers, the output of NextGEMS’ latest run is about 1 terabyte per simulated day, and a model should be run many times to average out noise.
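To get a feel for that volume, here is a rough back-of-the-envelope sketch in Python. It takes the quoted figure of about 1 terabyte per simulated day at face value. The 365-day year, the ensemble size of 10, and the function name are illustrative assumptions, not numbers from NextGEMS.

```python
# Rough estimate of model output volume, based on the ~1 TB per simulated day
# quoted for the 5-kilometer run. Everything else here is an assumption.
TB_PER_SIMULATED_DAY = 1.0  # approximate figure quoted in the text
DAYS_PER_YEAR = 365         # assumption: leap days ignored


def output_terabytes(simulated_years: float, ensemble_members: int = 1) -> float:
    """Total output in terabytes for a run (or an ensemble of identical runs)."""
    return TB_PER_SIMULATED_DAY * DAYS_PER_YEAR * simulated_years * ensemble_members


single_run = output_terabytes(2)                     # two simulated years
ensemble = output_terabytes(2, ensemble_members=10)  # hypothetical 10-member ensemble

print(f"Single two-year run: about {single_run:,.0f} TB")
print(f"Ten such runs:       about {ensemble:,.0f} TB")
```

Under these assumptions a single two-year run comes to roughly 730 terabytes, and repeating it to average out noise multiplies that figure by the number of repetitions.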

Knowing how to handle the data is one challenge. Receiving them from the supercomputer is another. DestinE’s fix for that transmission challenge will likely be to publicly stream the data from high-resolution models as they run, rather than having to store them on disk. Anyone could design applications to identify only the data they need to answer a specific question. Stevens likened this to fieldwork in which researchers place instruments out in the world to make observations. In this case, the instruments (apps) would be observing aspects of the world’s digital twin as it spins through its kaleidoscopic changes in fast motion.

Making the data public would also prevent users from having to seek help with a model run tailored to their needs. “I really like this idea that it’s not an individual thing, which often depends on some connections that one person has to another or a group of scientists,” Mieslinger said. “Streaming democratizes data access. It allows everyone on our globe to access the information that climate decisions are based on.”

Applied Physics

Among the NextGEMS hackathon’s attendees were representatives from the clean energy industry, just one of many that need climate data: Without detailed information about how reliable clean energy sources are, and how susceptible they are to damage from extreme weather, the industry will struggle to establish a stable electrical grid.

The evidence of that is already plain; in 2021 the United Kingdom experienced its lowest average wind speeds in 60 years. That was bad news for the country’s wind farms: The power a turbine can generate scales with the cube of wind speed, so even a 10% reduction in wind speed means about 27% less power. Windlessness was also bad news for the four nearby countries (Belgium, Denmark, Germany, and the Netherlands) that recently had pledged to increase wind generation capacity in the North Sea tenfold by 2050. No one saw the doldrums coming.
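The cubic relationship between wind speed and power can be checked with a few lines of Python. This is a minimal sketch that assumes an idealized turbine whose output depends only on the cube of wind speed. Real turbines also have cut-in and rated speeds that this ignores, and the function name is illustrative.

```python
def relative_power(speed_fraction: float) -> float:
    """Power relative to baseline, assuming output scales with wind speed cubed."""
    return speed_fraction ** 3


reduction = 0.10  # a 10% drop in wind speed
power_loss = 1.0 - relative_power(1.0 - reduction)

print(f"{reduction:.0%} less wind -> {power_loss:.1%} less power")
```

Because 0.9 cubed is 0.729, a 10% drop in wind speed removes about 27% of the available power under this idealization.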

“Before the wind drought, the kind of question I was being asked was, ‘Where’s the windiest place around Europe to put these wind turbines?’” said Hannah Bloomfield, a climate risk analyst based at the University of Bristol. “But then the question kind of changed a bit after we had a wind drought.” Wind farmers started asking where there was wind even during the drought. It was as if they were realizing in real time that change is the new normal.

Bloomfield said she had trouble answering the wind farmers’ questions without higher-resolution models. “You see a lot of studies where they want to represent activity at an individual wind farm or a power plant, but they’re having to use, say, 60- to 100-kilometer grid boxes of climate data to do it.” The vertical resolution of the new exascale versions could be especially key in this instance: Current models can’t directly solve for wind speed extremes at the height of most wind turbine rotor hubs, around 100 meters.

Palmer said the new models also could help determine the root causes of regional changes in wind, and so better predict them. The phenomenon called global stilling, for example, is a result of the Arctic surface warming faster than the middle latitudes. The discrepancy reduces the temperature gradient between the regions and slows the wind that results as the atmosphere tries to balance things out. But the upper atmosphere in the Arctic isn’t warming as fast as the surface; the winds in Europe could have died down for another reason. Palmer believes the new models are poised to reveal an answer.

Bloomfield also works with insurance companies that are trying to improve the accuracy of their risk assessments. For example, sharper models could help them price premiums more appropriately by making it clear who lives in a flood-prone area and who doesn’t. “If you’ve got a low-resolution model, it might make [the flood-prone region] look like a bigger area than it is,” she said, “or it might just miss the flood entirely.”

Researchers like Bloomfield are the sinews connecting industry stakeholders, policymakers, and the complex science of climate modeling. But as climate becomes part of nearly every decision made on the planet, they can’t continue to fill that role alone. Bloomfield, who is part of a group called Next Generation Challenges in Energy-Climate Modelling, recognized the importance of both educating stakeholders and making climate modeling data more accessible to everyone who needs to use them.

“This stuff is big, right? It’s chunky data. Although you can now produce [them] with this exascale computing, whether industries have caught up in terms of their capabilities to use [them] might be an important factor for projects like [Destination Earth] to think about,” Bloomfield said.

Delivering on the Promise

Stevens agreed that university labs can’t continue to carry the responsibility of lighting the way forward through climate change. “It’s ironic, right? What many people think is the most pressing problem in the long term for humanity, we handle with a collection of loosely coordinated research projects,” he said. “You don’t apply for a research grant to say, ‘Can I provide information to the farmers?’”

Stevens has argued that the world’s decisionmakers need to rely on climate models the same way farmers rely on weather reports, but that shift will require a concerted—and expensive—effort to create a kind of shared climate modeling infrastructure. He and Palmer proposed that the handful of nations with the capacity to do so should each build a central modeling agency—more farsighted versions of ECMWF in Europe or the National Weather Service in the United States—and actively work together to supply the world with reliable models that cover its range of policy needs.

“If the world invested a billion dollars a year, maybe split between five or six countries, this could revolutionize our capabilities in climate modeling,” said Palmer.

But some in the climate science community question whether higher-resolution models can really provide the information policymakers are looking for. Gavin Schmidt, director of the NASA Goddard Institute for Space Studies (GISS) and principal investigator of the GISS coupled ocean-atmosphere climate model, ModelE, said there may well be a ceiling to what we can predict about long-term variability in an ultimately chaotic climate system. One example, he said, is local rainfall 30 years from now, which can depend on the chaotic behavior of the circulation even in current models. “These trends are probably not predictable, and that won’t change with higher resolution.”

Schmidt pointed out that higher-resolution models can’t be run as often, making it harder to average out noise through a robust ensemble of repetitions. “Resolution is very, very expensive. With the same computational cost of one 1-kilometer climate model, you could be running tens of thousands to hundreds of thousands of lower-resolution models and really exploring the uncertainty.”
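The trade-off can be roughed out with a common rule of thumb. It is an assumption here, not a calculation from Schmidt: Halving the grid spacing quadruples the number of horizontal cells and roughly halves the allowable time step, so cost grows with about the cube of the refinement factor. The function name, the exponent, and the coarse resolutions below are illustrative choices, and the number of vertical levels is assumed to stay fixed.

```python
def relative_cost(coarse_km: float, fine_km: float, exponent: int = 3) -> float:
    """How many coarse runs fit in the budget of one fine run.

    Assumes cost scales with (coarse spacing / fine spacing) ** exponent:
    two horizontal dimensions plus a proportionally shorter time step.
    """
    return (coarse_km / fine_km) ** exponent


for coarse_km in (25, 50, 100):
    runs = relative_cost(coarse_km, 1.0)
    print(f"One 1 km run costs about as much as {runs:,.0f} runs at {coarse_km} km")
```

Under this scaling, one 1-kilometer run costs as much as roughly 15,625 runs at 25 kilometers or 125,000 runs at 50 kilometers, the same order of magnitude as the range Schmidt described.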

There just isn’t enough evidence that kilometer-scale models will produce different answers than existing models do, he said. “As a research project, I think these are great things to be doing. My biggest concern is that people are overpromising what’s going to come out of these things.”

A New Moon Shot?

NextGEMS is now trying out its model with a coupled ocean and atmosphere, running at a resolution of 2.5 kilometers for only a few months. Japanese researchers are working on models that resolve at only a few hundred meters globally, which Mieslinger gleefully called “absolutely crazy.” So far, none of this is being done on an exascale computer but, rather, on the petascale computers—less than half as fast—that are currently operational in Europe and Japan.

Atmosphere-focused climate models are now being run at 3-kilometer resolution on Frontier, the world’s first exascale computer, at the Oak Ridge National Laboratory in Tennessee. The U.S. Department of Energy (DOE) has been developing a program similar to DestinE for the past 10 years, preparing to have its Climate and Earth System model ready for this moment. The project, known as E3SM, for Energy Exascale Earth System Model, is specifically geared toward questions of importance to DOE, like how the production of bioenergy will affect land use, and in turn affect the climate system.

Frontier is expected to be able to produce a simulated year of 3-kilometer climate model data in less than a day. But even E3SM will need to share time on Frontier with DOE’s other heavy-lift applications, including those simulating nuclear explosions. Such national security needs have traditionally taken priority, but governments are tracking and predicting the results of climate change as matters of national security.

In June 2022, Europe announced plans for its first exascale computer, JUPITER (Joint Undertaking Pioneer for Innovative and Transformative Exascale Research), to be shared among the continent’s many data-heavy applications. But Stevens said it will be difficult to provide reliable high-resolution climate data on an ongoing basis without exascale computers that are dedicated to the task full time and a centralized organization leading the way. He hopefully cited other cost-intensive human achievements—the CERN (European Organization for Nuclear Research) Large Hadron Collider, the James Webb Space Telescope—as precedents. And, he pointed out, governments have stepped up before. “NASA was created when we recognized the importance of space. The Department of Energy or similar institutions in other countries [were prioritized] to tame the atom,” he said. On the other hand, “from a scientific-technological perspective, climate is still treated as an academic hobby.”

Even if higher-resolution models can yield the climate answers we need, whether the world’s governments will choose to muster the collective resolve exemplified by the Space Race remains to be seen. Reminders of geopolitical barriers are everywhere—Palmer can’t apply for DestinE funding because the United Kingdom has left the European Union (“Brexit’s a nuisance,” he said), and the conflict in Ukraine has diverted attention from reducing our consumption of fossil fuel in the future to worrying about where we can get enough of it now. But, Palmer countered, the conflict has also made clear how much easier things would be if we didn’t need fossil fuels at all, and the urgency of getting serious about a stable clean energy grid.

That means high-resolution models, or at least the chance to see what they can do, can’t come soon enough.

Author Information

Mark Betancourt (@markbetancourt), Science Writer