A staggering number of peer-reviewed papers are published each year in environmental science, hydrology, geology, ecology, climatology, companion social sciences, and related fields. The vast majority of these papers represent high-quality contributions to our understanding of the world. Yet they build on bodies of work that are already astonishingly large, making it ever more difficult for established scientists to keep up and for young scientists to get up to speed on foundations and frontiers within their fields.


This publication overload hampers our ability to advance scientific frontiers, address societally important challenges, and support early-career scientists. It can also sometimes lead to researchers duplicating work, rediscovering previously published ideas, or, worse, perpetuating mistakes—inefficient uses of always-limited resources. Furthermore, geographically localized studies, which may be incremental in scope but provide critical context and comparisons for broader or more global studies in applied environmental science, are often missed.

Considering that the pace of science and scientific publication is unlikely to slow, we need a better approach to synthesizing the wealth of available knowledge. We propose that—and describe how—one such approach could involve a human-driven, machine-aided online synthesis tool that evolves over time and seamlessly connects large amounts of related research and information while preserving the richness of detail found in individual peer-reviewed papers.

A Publications Avalanche

Today more than 3 million peer-reviewed papers are published each year across all fields—some 500,000 in the United States alone [Jinha, 2010; Johnson et al., 2018; White, 2019]. The number of papers related to critical environmental concerns—drought, fire, and climate change, among others—is especially high. For example, using standard search engines to find academic peer-reviewed literature on "fire or wildfire" in the western United States turns up more than 20,000 papers, with more than 1,000 published per year since 2016. At these publication rates, even within-field experts cannot stay up to date on all the work being done, which can hamper hypothesis evolution and limit the adoption of new observation and modeling technologies.

The burden of publication overload is often greatest for early-career scientists, who are simultaneously trying to establish their expertise and their careers [Atkins et al., 2020; Thakore et al., 2014]. Becoming an expert requires reading, understanding, and integrating others' work, and knowing what to read, and in what order, can dramatically improve one's grasp of key concepts. Without good mentors to help curate information, many early-career scientists are left to wander the halls of our digital libraries—often with the Google search bar their only guide—in the hope that they can identify and understand the key foundational and frontier knowledge they need. A lack of effective mentorship is a notable barrier to academic advancement and success, especially for individuals from underrepresented backgrounds [Deanna et al., 2022].

Publication overload is also a barrier to scientists receiving recognition for their work. This is a community-wide problem, but again, it may be particularly acute for early-career researchers. Even when scientists publish their work, it often goes unrecognized amid the "tide" of other new literature. The percentage of papers that are never cited is difficult to measure and varies by discipline, but estimates often run into the double digits.

Early in their careers, scientists often engage in more incremental research—for example, testing existing hypotheses in new locations or under new conditions, or applying and assessing emerging methodologies in innovative ways. This sort of incremental research is central to the scientific enterprise, but it is often published in discipline-specific journals rather than in higher-profile, multidisciplinary outlets. As a result, this research is often overlooked, especially by readers in other fields and specializations, and thus greatly undervalued.

Existing Initiatives Are Only a Beginning


Publication overload is not new. Scientists have tried to remedy the problem with various synthesis products and approaches. For example, review articles such as the Tamm Reviews, which cover research in forest ecology and management, typically distill findings from numerous studies related to a given theme. In addition, there are journals such as Wiley's WIREs series that focus almost exclusively on review papers. There are also standards for high-quality systematic reviews, such as those provided by the Collaboration for Environmental Evidence. We have also built synthesis institutes. Good examples include the National Center for Ecological Analysis and Synthesis, the U.S. Geological Survey's John Wesley Powell Center for Analysis and Synthesis, and the National Socio-Environmental Synthesis Center—all of which are increasingly focusing on producing synthesis products like review papers and databases.

The National Science Foundation (NSF) has programs like Research Coordination Networks that support groups of investigators as they coordinate and synthesize related research, training, and educational activities. Finally, governmental and nongovernmental organizations produce other synthesis materials and reports, such as California’s Climate Change Assessments and the Intergovernmental Panel on Climate Change’s reports. Similar in function but decidedly different in approach, there are data provisioning websites like Google Earth that provide data and model outputs relevant to core questions in environmental science.

These existing tools and initiatives clearly contribute to information synthesis, although the array of syntheses and their various products can themselves be overwhelming. Critically, these synthesis products are often static or are limited in scope, meaning users can easily mistake outdated syntheses for up-to-date understanding or misapply generalized findings to specific locations or circumstances where they aren’t relevant.

In addition, subsequent findings may diverge from working hypotheses in preexisting synthesis papers or add specificity to flesh out general principles (e.g., quantifying how warming temperatures relate to earlier snowmelt in a particular location). However, because synthesis papers are rarely revisited, this sort of evolution in the current understanding is easily overlooked or lost.

Synthesis papers and reports also typically focus on specific topics, but links to reviews of related topics are not consistently included, particularly if topics cross disciplines. For example, a review paper discussing the effectiveness of wildfire fuel treatment methods may not mention or link to reviews of relevant science, such as how climate affects fire risk.

Artificial Intelligence, with Human Guidance

If existing human-generated synthesis products cannot save us from publication overload, can artificial intelligence (AI) help? Indeed, it can [Matthews, 2021]. Machine learning–enabled products that automate searches and distill information are already available: Iris.ai, Semantic Scholar, Connected Papers, Open Knowledge Maps, and Local Citation Network, to name a few.

Nonetheless, conducting meaningful searches of publications around specific topics in environmental science remains challenging [Romanelli et al., 2021]. In part, this is because finding literature does not necessarily lead to understanding, particularly if searches yield hundreds of papers. Focusing only on highly cited papers may also be problematic, given that the reasons they are highly cited may not align with the goal of understanding [Romanelli et al., 2021]. For example, high citation counts may simply track topics of current general interest rather than advances in expert knowledge. In some cases, high citation counts may result from scientific disagreements playing out in the literature or from papers serving as oft-cited examples of discredited assumptions.

Similarly, automated mapping of domain knowledge by AI algorithms that cluster papers around semantic terms can highlight topical areas and show how trajectories of publications on particular topics evolve through time (e.g., charting numbers of papers related to dust on snow). But this clustering does not necessarily synthesize ideas about a topic [Borner and Polley, 2014; Franconeri et al., 2021; Lafia et al., 2021]. And highly generalized syntheses (such as what might emerge from ChatGPT) do not readily contribute to the more nuanced, detailed understanding that researchers need to help advance environmental science.


A dynamic online metasynthesis tool that makes finding, understanding, and updating science equitable and efficient would be a transformative solution to address these shortcomings. Such a tool could combine the strengths of human-driven science syntheses with technical advances in visualization and AI. It could also evolve as our knowledge deepens and synthesize research using customizable searches while still preserving and providing the detail and context found in individual peer-reviewed papers when prompted.

Classic review papers organize disparate ideas into conceptual models. They highlight convergence and divergence around core hypotheses. They evaluate the techniques used in observational data collection, data analysis, and modeling. And they place specific papers into conceptual frameworks that guide understanding.

The ideal tool would retain these strengths while more thoroughly connecting review papers and reports across disciplines and topics. By using recent advances in AI, including natural language AI (e.g., ChatGPT), and visualization techniques such as "on the fly" rendering, we can imagine tailored user interfaces involving AI-aided searches with graphics and text that make traversing knowledge landscapes easy and efficient. These interfaces could, for example, guide more novice users to current conceptual models related to general topics (e.g., snow accumulation and melt) as starting points, whereas experts could specify a location- and scale-specific research hypothesis and be directed to related pages. However, active leadership by scientists would be the critical feature. The primary design of the knowledge synthesis (e.g., the conceptual models, hypotheses, and how current techniques can be applied to advance these) would be generated and updated by domain scientists.

Building this tool will require collaboration and partnerships among scientists, visualization experts, database specialists, ontologists (language engineers), and machine learning experts. And, we argue, if this collaborative process is led by and involves scientists at every step, the resulting product will better fit the needs of our community. Although improved private-sector products may emerge, such as a “better” Google Scholar or more detailed ChatGPT, these will not necessarily maintain the strengths of human-driven science syntheses.

From Concept to Reality

What might our proposed tool look like in practice? Overall, we envision linked, web-based pages that present conceptual diagrams and current working hypotheses (and counterhypotheses) related to particular research questions, as well as examples of evidence that confirms or disputes these hypotheses for particular locations and time and space scales. We note that finding exceptions to general rules—or quantifying the magnitudes of effects—in particular settings is often how environmental science advances. This information would all be linked to peer-reviewed papers.
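The article does not prescribe an implementation. As a minimal sketch, assuming a simple in-memory model, the linked structure described here could be represented in Python as follows: working hypotheses with their counterhypotheses, plus location- and scale-specific evidence, each item traceable to a peer-reviewed paper. The class names, fields, and `Stance` labels are hypothetical. The hypothesis text and DOIs in the usage example are placeholders, not real findings or papers.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stance(Enum):
    """Whether a study's findings support or dispute a working hypothesis."""
    SUPPORTS = "supports"
    DISPUTES = "disputes"


@dataclass
class Evidence:
    """One piece of evidence, always traceable to a peer-reviewed paper."""
    doi: str             # DOI of the source paper (placeholders in the example below)
    stance: Stance
    location: str        # hypothetical free-text site label
    spatial_scale: str   # e.g., "plot", "watershed", "regional"
    temporal_scale: str  # e.g., "seasonal", "interannual"


@dataclass
class Hypothesis:
    """A working hypothesis with its counterhypotheses and linked evidence."""
    statement: str
    counterhypotheses: list[str] = field(default_factory=list)
    evidence: list[Evidence] = field(default_factory=list)

    def evidence_at(self, location: str) -> list[Evidence]:
        """Return the evidence recorded for a single location."""
        return [e for e in self.evidence if e.location == location]

    def tally(self) -> dict[Stance, int]:
        """Count supporting vs. disputing evidence across all locations."""
        counts = {s: 0 for s in Stance}
        for e in self.evidence:
            counts[e.stance] += 1
        return counts


if __name__ == "__main__":
    # Illustrative only: placeholder statement, sites, and DOIs.
    h = Hypothesis(
        statement="Earlier snowmelt reduces late-season vegetation greenness",
        counterhypotheses=["Vegetation response is buffered by groundwater"],
    )
    h.evidence.append(Evidence("10.0000/placeholder-a", Stance.SUPPORTS, "site A", "watershed", "interannual"))
    h.evidence.append(Evidence("10.0000/placeholder-b", Stance.DISPUTES, "site B", "plot", "seasonal"))
    print(h.tally())
    print([e.doi for e in h.evidence_at("site A")])
```

Keeping location and scale on each evidence record, rather than on the hypothesis, makes it possible to surface exceptions to a general rule in particular settings. The text identifies this as how environmental science often advances.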

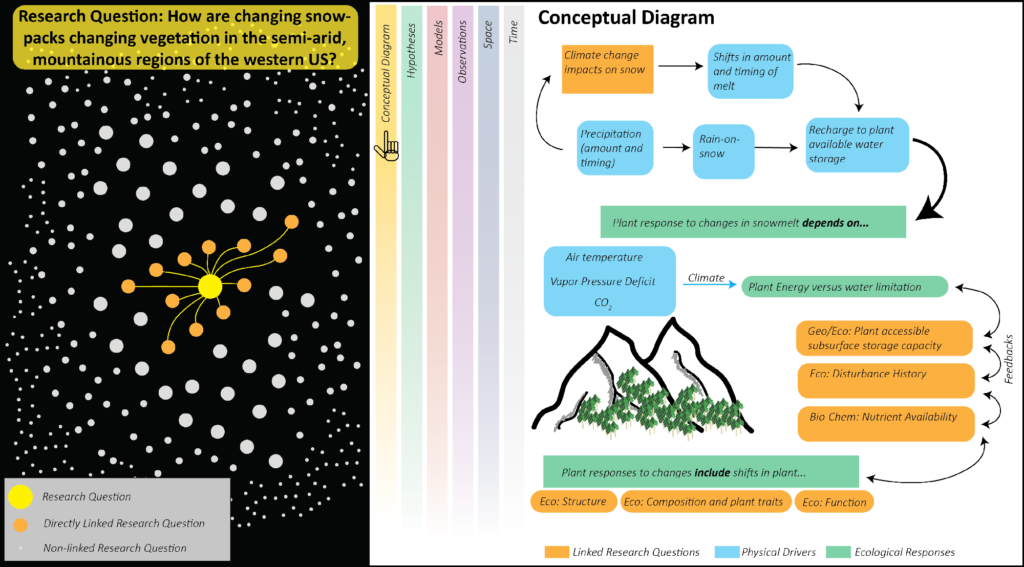

Figures 1 and 2 illustrate potential front-end pages focusing on the question of how changing snowpacks relate to changing vegetation in semiarid, mountainous regions of the U.S. West. A dashboard would allow users to move quickly among pages covering different aspects of this broad research question, and a navigation pane would show connections between the selected question and other related questions, such as how snowpacks, which store water for vegetation, are changing in the region.
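A navigation pane of this kind amounts to a graph of linked research questions. As a minimal sketch, assuming links are stored as a plain adjacency table, a breadth-first search could list the questions within a chosen number of links of the selected one. The function name, the `max_hops` parameter, and the third question in the link table are hypothetical. Only the first two questions come from the text.

```python
from collections import deque


def related_questions(links: dict[str, list[str]], start: str, max_hops: int = 1) -> list[str]:
    """Breadth-first search over question-to-question links.

    Returns every question reachable from `start` within `max_hops` links,
    nearest first, without repeats. Links are treated as directed.
    """
    seen = {start}
    found: list[str] = []
    queue = deque([(start, 0)])
    while queue:
        question, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in links.get(question, []):
            if neighbor not in seen:
                seen.add(neighbor)
                found.append(neighbor)
                queue.append((neighbor, depth + 1))
    return found


# Hypothetical link table; the last question is illustrative only.
LINKS = {
    "How do changing snowpacks relate to changing vegetation?": [
        "How are snowpacks changing in the region?",
    ],
    "How are snowpacks changing in the region?": [
        "How is snowmelt timing shifting?",
    ],
}

if __name__ == "__main__":
    start = "How do changing snowpacks relate to changing vegetation?"
    print(related_questions(LINKS, start, max_hops=2))
```

A small hop limit keeps the pane focused on closely related questions. A larger limit would let users traverse further across the knowledge landscape.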


Because scientific knowledge is most valuable when it is current, the system would require a robust process by which it could keep up with changes in contemporary understanding. This process, more so than its design, would be the system’s key innovation. Developing the details of the updating process—including how often, by whom, and by what criteria it would be updated—would require careful thought and rigorous debate by the scientific community. And the system’s success ultimately would depend on scientists’ willingness to contribute. The more users, and the larger the updating community, the better the end product.

To maintain the system's credibility while keeping it flexible, dynamic, and accessible, we envision leveraging existing peer review systems, which provide critical quality control on the science that is published. The design of the conceptual diagrams and hypotheses would require direct involvement from the science community, and working group engagement would be critical here. We anticipate using iterative working group processes such as those used by the Intergovernmental Panel on Climate Change and leveraging existing community organizations such as AGU to accomplish this task.


In developing the proposed tool, creating incentives to motivate participation will be important. For example, researchers who contribute to curation, conceptual model evolution, hypothesis development, or software development, or who create, contribute to, and maintain the tool, should receive formal recognition [Carter et al., 2021]. This push for recognition parallels similar trends in universities and funding agencies such as NSF that are expanding the scope of work they credit to include software and database development and other contributions.

The peer-reviewed paper, a 17th-century invention, has served science well. However, as scientific understanding of all manner of topics and questions evolves, we need a new system to access knowledge that provides expert-driven synthesis across many studies while preserving the hard-won details of individual studies.

As a first step, we suggest that communities of environmental scientists convene working groups to focus on designing a tool similar to what we have proposed here and, importantly, on the rules for how scientists would engage to support the tool's continual evolution. Concurrently, these groups must partner with artificial intelligence and other specialists to take advantage of advances in visualization, information updating, and search. Agencies and organizations such as NSF and AGU should support these efforts by convening and funding these working groups, prototype development, and other community engagement efforts.

With the rapidly rising number of peer-reviewed papers and the proliferation of synthesis tools like ChatGPT, now is the time for these communities to recognize the limitations (and strengths) of current and emerging scientific dissemination and synthesis products, and to play a leadership role in developing new tools. We need a radical solution to publication overload that will help researchers—established and early-career alike—to keep pushing scientific frontiers.

References

Atkins, K., et al. (2020), “Looking at myself in the future”: How mentoring shapes scientific identity for STEM students from underrepresented groups, Int. J. STEM Educ., 7(1), 42, https://doi.org/10.1186/s40594-020-00242-3.

Borner, K., and D. E. Polley (2014), Visual Insights: A Practical Guide to Making Sense of Data, 297 pp., MIT Press, Cambridge, Mass.

Carter, R. G., et al. (2021), Innovation, entrepreneurship, promotion, and tenure, Science, 373(6561), 1,312–1,314, https://doi.org/10.1126/science.abj2098.

Deanna, R., et al. (2022), Community voices: The importance of diverse networks in academic mentoring, Nat. Commun., 13, 1681, https://doi.org/10.1038/s41467-022-28667-0.

Franconeri, S. L., et al. (2021), The science of visual data communication: What works, Psychol. Sci. Public Interest, 22(3), 110–161, https://doi.org/10.1177/15291006211051956.

Jinha, A. E. (2010), Article 50 million: An estimate of the number of scholarly articles in existence, Learned Publ., 23(3), 258–263, https://doi.org/10.1087/20100308.

Johnson, R., A. Watkinson, and M. Mabe (2018), The STM report: An overview of scientific and scholarly publishing, 5th ed., 212 pp., Int. Assoc. of Sci., Tech., and Med. Publ., The Hague, Netherlands, https://www.stm-assoc.org/2018_10_04_STM_Report_2018.pdf.

Lafia, S., et al. (2021), Mapping research topics at multiple levels of detail, Patterns, 2(3), 100210, https://doi.org/10.1016/j.patter.2021.100210.

Matthews, D. (2021), Drowning in the literature? These smart software tools can help, Nature, 597(7874), 141–142, https://doi.org/10.1038/d41586-021-02346-4.

Romanelli, J. P., et al. (2021), Four challenges when conducting bibliometric reviews and how to deal with them, Environ. Sci. Pollut. Res., 28(43), 60,448–60,458, https://doi.org/10.1007/s11356-021-16420-x.

Thakore, B. K., et al. (2014), The Academy for Future Science Faculty: Randomized controlled trial of theory-driven coaching to shape development and diversity of early-career scientists, BMC Med. Educ., 14(1), 160, https://doi.org/10.1186/1472-6920-14-160.

White, K. (2019), Publications output: U.S. trends and international comparisons, Sci. Eng. Indicators, NSB-2020-6, 35 pp., Natl. Sci. Found., Alexandria, Va., https://ncses.nsf.gov/pubs/nsb20206/.

Author Information

William Brandt, Scripps Institution of Oceanography, University of California, San Diego; and Christina Tague, University of California, Santa Barbara