Projections of climate change are based on theory, historical data, and results from physically based climate models. Building confidence in climate models and their projections involves quantitative comparisons of simulations with a diverse suite of observations. Climate modelers often consider information from well-established tests and comparisons among existing models to help decide on a new model version among multiple candidates.

Climate model developers and those who use these models benefit from sharing information with each other. Both groups require access to the best available data and rely on open source software tools designed to facilitate the analysis of climate data. Developers benefit the most from comparisons of their models with observations and other models when the results of such analysis can be made quickly available.

Here we introduce a new climate model evaluation package that quantifies differences between observations and simulations contributed to the World Climate Research Programme’s Coupled Model Intercomparison Project (CMIP). This package is designed to make an increasingly diverse suite of summary statistics more accessible to modelers and researchers.

The Coupled Model Intercomparison Project

Model intercomparison projects (MIPs) provide an effective framework for organizing numerical experimentation and enabling researchers to contribute to the analysis of model behavior. To improve our understanding of climate variability and change, CMIP coordinates a host of scientifically focused subprojects that address specific processes or phenomena, including clouds, paleoclimates, and climate sensitivity [Taylor et al., 2012; Meehl et al., 2014; Eyring et al., 2015]. The results from CMIP are vast, of petabyte scale, with simulations contributed by tens of modeling groups around the globe.

By adopting a common set of conventions and procedures, CMIP provides opportunities for a broad community of researchers to readily examine model results and compare them to observations. The success of this project has been directly responsible for enhancing the pace of climate research, resulting in hundreds of publications and a multimodel perspective of climate that has proven invaluable for national and international climate assessments.

Metrics Package Aids Accessibility

As a step toward making succinct performance summaries from CMIP more accessible, the Program for Climate Model Diagnosis and Intercomparison (PCMDI) at Lawrence Livermore National Laboratory has developed an analysis package that is now available.

The PCMDI Metrics Package (PMP) leverages the vast CMIP data archive and uses common statistical error measures to compare results from climate model simulations to observations. The current release includes well-established large- to global-scale mean climatological performance metrics. It consists of four components: analysis software, a collection of observationally based global or near-global data sets, a database of performance metrics computed from all models contributing to CMIP, and usage documentation.

PMP [Doutriaux et al., 2015] uses the Python programming language and Ultrascale Visualization Climate Data Analysis Tools (UV-CDAT) [Williams, 2014; Williams et al., 2016], a powerful software tool kit that provides cutting-edge diagnostic and visualization capabilities. PMP is designed to enable potential users unfamiliar with Python and UV-CDAT to test their own models by leveraging the considerable CMIP infrastructure. Users with some Python experience will have access to a wide range of analysis capabilities.

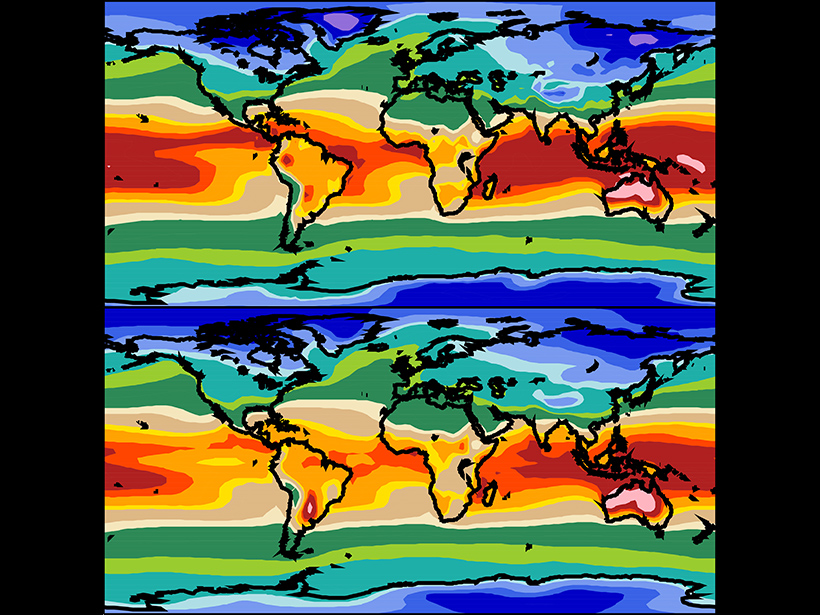

PMP enables users to synthesize model performance characteristics from dozens of maps and zonal average plots. The well-established quantitative tests of the seasonal cycle in the current release do not directly relate to the reliability of model projections but do represent important large-scale climatological features evident in observations [Flato et al., 2013]. Modelers often consider information of this kind along with numerous other diagnostics, which they combine with expert judgment to help decide on a new model version among multiple candidates.

CMIP as a Model Development Tool

By the time research results are published—often many months or even years after CMIP output is generated and becomes available—most model developers are already working on newer model versions that supersede the previous CMIP contribution. Modelers would benefit more directly from participation in CMIP if during the model development process the merits and shortcomings of their model relative to other state-of-the-science models were immediately apparent. The PMP database, when it is installed locally, enables modeling groups to rapidly compare their current model version(s) with CMIP models rather than await feedback from the external analysis community. This information can help identify deficiencies in a model, which can be useful in setting priorities for further model development work.

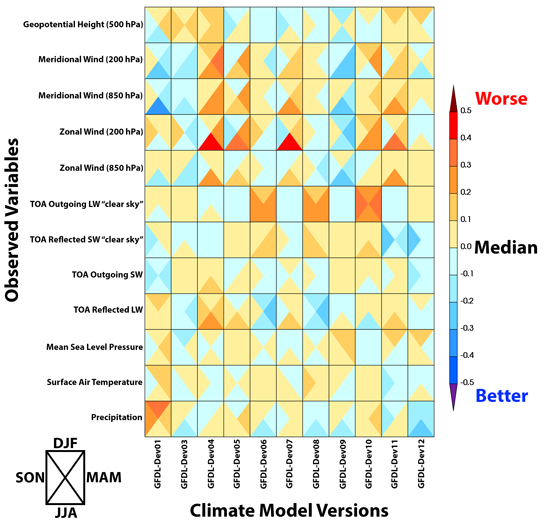

Modeling groups have already shown interest in using PMP to compare multiple versions of a model under development as part of their evaluation procedures. Figure 1 provides an example of how results from PMP can be used to summarize the relative merits and shortcomings of different configurations of the same atmospheric model run with prescribed sea surface temperatures and sea ice (the protocol of the Atmospheric Model Intercomparison Project, AMIP).

Users can tailor the PMP “quick-look” results like Figure 1 to compare various characteristics of their model versions. For example, contrasting the development improvements or setbacks from different model versions in relation to the distribution of structural errors in the CMIP multimodel ensemble can provide an objective assessment as to whether model performance changes are significant. Making such objective summaries routine will aid the development process and help modelers ensure that they do not overlook any hidden degradation in model performance during development.
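One way to picture such a comparison is to place each candidate version's error within the spread of errors across the multimodel ensemble. The sketch below is only an illustration and does not use PMP's own interface: the function names, the choice of normalizing by the ensemble median, and all error values are assumptions made up for this example.

```python
import numpy as np

def relative_error(candidate_error, ensemble_errors):
    """Error relative to the ensemble median: (E - median) / median.

    Negative values mean the candidate has a smaller error than the
    median ensemble member; positive values mean a larger error.
    """
    ensemble_errors = np.asarray(ensemble_errors, dtype=float)
    median = np.median(ensemble_errors)
    return (candidate_error - median) / median

def fraction_outperformed(candidate_error, ensemble_errors):
    """Fraction of ensemble members whose error exceeds the candidate's."""
    ensemble_errors = np.asarray(ensemble_errors, dtype=float)
    return float(np.mean(ensemble_errors > candidate_error))

# Hypothetical RMS errors for one field (illustrative only, not PMP output)
cmip_rmse = [1.8, 2.1, 2.4, 2.6, 3.0, 3.3, 3.9]
candidate_versions = {"version_a": 2.9, "version_b": 2.2}

for name, err in candidate_versions.items():
    print(
        name,
        "relative error:", round(relative_error(err, cmip_rmse), 3),
        "fraction of ensemble outperformed:",
        round(fraction_outperformed(err, cmip_rmse), 3),
    )
```

A change between versions that is small compared with the ensemble spread is harder to call a meaningful improvement than one that moves the model from the upper to the lower half of the distribution.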

Routine CMIP Evaluation Made More Accessible

Future phases of CMIP [Meehl et al., 2014; Eyring et al., 2015] will call for a small ongoing set of experiments that will be revisited each time a new model version is released. Included among these benchmark experiments, which are referred to as the CMIP DECK (Diagnostic, Evaluation and Characterization of Klima; Klima is German for climate), are an AMIP run and a coupled (atmosphere-ocean-land-ice) model preindustrial control run with no external forcings. (External forcings are climate factors that are not simulated but are included in some experiments as a time-varying constraining influence based on data such as volcanic eruptions, man-made aerosols, and increasing atmospheric carbon dioxide.) A historically forced coupled run is also proposed as an additional benchmark experiment, although the external forcings applied to these runs are likely to evolve across CMIP generations.

These benchmark experiments (DECK and historical) are expected to encourage greater emphasis on developing diagnostic capabilities that can be used to systematically evaluate model performance. Because PCMDI’s metrics package relies on the data conventions and standards used in CMIP, there is some assurance that it will remain suitable and useful not only during the current phase of CMIP but also in future phases.

Current and Future Releases of PMP

Version 1.1 of PCMDI’s Metrics Package (PMPv1.1) is publicly available, with functionality that is currently designed to serve modeling groups or individuals performing simulations. It provides well-established model climatology comparisons with observations, including the following:

- area-weighted statistics: bias, pattern correlation, variance, centered root-mean-square difference, and mean absolute error

- results for global and tropical domains, the extratropics of both hemispheres, departures from the zonal mean, and land- and ocean-only domains

- multiple observationally based estimates of each field tested, including top-of-the-atmosphere and surface radiative fluxes and cloud radiative effects; precipitation; precipitable water; sea surface temperature; mean sea level pressure; surface air wind (10 meters), temperature (2 meters), and humidity (2 meters); and upper air temperature, winds, and geopotential height
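The area-weighted statistics listed above can be sketched with plain NumPy. This is a minimal illustration under stated assumptions, not PMP's implementation: the function names, the cosine-of-latitude weighting on a regular grid, the 20°S–20°N definition of the tropics, and the synthetic fields are all hypothetical choices for this example.

```python
import numpy as np

def area_weights(lat_deg, n_lon):
    """Cosine-of-latitude weights on a regular grid, shape (n_lat, n_lon)."""
    return np.cos(np.deg2rad(lat_deg))[:, None] * np.ones(n_lon)

def weighted_stats(model, obs, weights):
    """Area-weighted error statistics between two 2-D climatological fields."""
    w = weights / weights.sum()          # normalize so the weights sum to 1
    diff = model - obs
    bias = np.sum(w * diff)
    m_anom = model - np.sum(w * model)   # remove each field's weighted mean
    o_anom = obs - np.sum(w * obs)
    m_var = np.sum(w * m_anom**2)
    o_var = np.sum(w * o_anom**2)
    return {
        "bias": bias,
        "mean_absolute_error": np.sum(w * np.abs(diff)),
        "rms_difference": np.sqrt(np.sum(w * diff**2)),
        "centered_rms_difference": np.sqrt(np.sum(w * (m_anom - o_anom) ** 2)),
        "pattern_correlation": np.sum(w * m_anom * o_anom) / np.sqrt(m_var * o_var),
        "model_variance": m_var,
        "obs_variance": o_var,
    }

# Synthetic 2.5-degree fields (illustrative only, not real observations)
lat = np.arange(-88.75, 90.0, 2.5)
lon = np.arange(0.0, 360.0, 2.5)
obs = 288.0 - 40.0 * np.sin(np.deg2rad(lat))[:, None] ** 2 + 0.0 * lon
model = obs + 1.0 + 0.5 * np.cos(np.deg2rad(lon))

w_global = area_weights(lat, lon.size)
# Assumed tropical band of 20S-20N: zero the weights outside it
w_tropics = np.where(np.abs(lat)[:, None] <= 20.0, w_global, 0.0)

for name, w in [("global", w_global), ("tropics", w_tropics)]:
    stats = weighted_stats(model, obs, w)
    print(name, {k: round(float(v), 3) for k, v in stats.items()})
```

Restricting a statistic to a domain by zeroing weights keeps a single code path for every region; a land- or ocean-only domain could be handled the same way with a mask in place of the latitude test. The squared root-mean-square difference equals the squared bias plus the squared centered root-mean-square difference, which offers a quick consistency check on any implementation.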

Additionally, metrics for the El Niño–Southern Oscillation recommended by the CLIVAR Pacific Basin Panel are available in the PMP development repository.

Community users of PMP can develop and include additional tests of model behavior and work with the PCMDI team to integrate these into the package. Future releases will include summary statistics for sea ice distribution, land surface vegetation characteristics (in collaboration with the International Land Model Benchmarking, ILAMB), three-dimensional structure of ocean temperature and salinity, monsoon onset and withdrawal, the diurnal cycle of precipitation, major modes of climate variability, and selected “emergent constraints” [Flato et al., 2013].

Anyone interested in using PMP should contact the development team ([email protected]) for information on the latest functionality and installation procedures, which are advancing rapidly. As we embark on developing PMP as a community-based capability, we would like to hear from anyone interested in working with us to include an increasingly diverse set of performance metrics that can be used for systematic evaluation of the CMIP DECK and other simulations.

Acknowledgments

The work from Lawrence Livermore National Laboratory is a contribution to the U.S. Department of Energy, Office of Science, Climate and Environmental Sciences Division, Regional and Global Climate Modeling Program under contract DE-AC52-07NA27344. We are grateful to our colleagues at the Geophysical Fluid Dynamics Laboratory, Institut Pierre-Simon Laplace, Commonwealth Scientific and Industrial Research Organisation, and National Energy Research Scientific Computing Center who have tested PMP and provided invaluable feedback for its improvement.

References

Doutriaux, C., P. J. Durack, and P. J. Gleckler (2015), PCMDI Metrics initial release, Zenodo, Geneva, Switzerland, doi:10.5281/zenodo.13952.

Eyring, V., S. Bony, G. A. Meehl, C. Senior, B. Stevens, R. J. Stouffer, and K. E. Taylor (2015), Overview of the Coupled Model Intercomparison Project Phase 6 (CMIP6) experimental design and organisation, Geosci. Model Dev. Discuss., 8, 10,539–10,583, doi:10.5194/gmdd-8-10539-2015.

Flato, G., et al. (2013), Evaluation of climate models, in Climate Change 2013: The Physical Science Basis. Contribution of Working Group I to the Fifth Assessment Report of the Intergovernmental Panel on Climate Change, edited by T. F. Stocker et al., pp. 741–866, Cambridge Univ. Press, Cambridge, U. K., doi:10.1017/CBO9781107415324.020.

Gleckler, P. J., K. E. Taylor, and C. Doutriaux (2008), Performance metrics for climate models, J. Geophys. Res., 113, D06104, doi:10.1029/2007JD008972.

Meehl, G. A., R. Moss, K. E. Taylor, V. Eyring, R. J. Stouffer, S. Bony, and B. Stevens (2014), Climate model intercomparison: Preparing for the next phase, Eos Trans. AGU, 95(9), 77–78, doi:10.1002/2014EO090001.

Taylor, K. E., R. J. Stouffer, and G. A. Meehl (2012), An overview of CMIP5 and the experiment design, Bull. Am. Meteorol. Soc., 93, 485–498, doi:10.1175/BAMS-D-11-00094.1.

Williams, D. N. (2014), Visualization and analysis tools for ultrascale climate data, Eos Trans. AGU, 95(42), 377–378, doi:10.1002/2014EO420002.

Williams, D. N., et al. (2016), UV-CDAT v2.4.0, Zenodo, Geneva, Switzerland, doi:10.5281/zenodo.45136.

Author Information

Peter J. Gleckler, Charles Doutriaux, Paul J. Durack, Karl E. Taylor, Yuying Zhang, and Dean N. Williams, Lawrence Livermore National Laboratory, Livermore, Calif.; email: [email protected]; Erik Mason, Geophysical Fluid Dynamics Laboratory, Princeton, N.J.; and Jérôme Servonnat, Institut Pierre Simon Laplace des Sciences de l’Environnement Global, Université Paris–Saclay, Paris, France

Citation: Gleckler, P. J., C. Doutriaux, P. J. Durack, K. E. Taylor, Y. Zhang, D. N. Williams, E. Mason, and J. Servonnat (2016), A more powerful reality test for climate models, Eos, 97, doi:10.1029/2016EO051663. Published on 3 May 2016.

Text © 2016. The authors. CC BY-NC 3.0

Except where otherwise noted, images are subject to copyright. Any reuse without express permission from the copyright owner is prohibited.